This lesson is simply meant to acquaint you with the USAJobs.gov website as it appears to the general user, then with its API, and with how the two data systems are similar and different. More importantly, it's a casual demonstration of how to test things with tools you're already familiar with, i.e. using the web browser to try out the API and to closely examine how the URLs are constructed. At the end, I present some sample Python code that can be used to download the site's job listings programmatically.

- About USAJobs.gov

- The user-friendly website

- The developer-friendly API

- The Query String

- Play with Python

About USAJobs.gov

From USAJobs.gov's About page:

USAJOBS.gov is a free web-based job board enabling federal job seekers access to thousands of job opportunities across hundreds of federal agencies and organizations, allowing agencies to meet their legal obligation (5 USC 3327 and 5 USC 3330) of providing public notice for federal employment opportunities.

As the Federal Government's official source for federal job listings and employment opportunity information, USAJOBS.gov provides a variety of opportunities. To date, USAJOBS has attracted over 17 million job seekers.

Sounds pretty spiffy. Like Craigslist, USAJobs.gov is a site that has a tremendous amount of useful content and makes it easy to access, and yet we can think of ways that we could remix the data for our own needs. Unlike Craigslist, USAJobs.gov encourages the reuse of its data and, toward that end, has created an API:

The target is to provide Public Jobs to Commercial Job Boards, Mobile Apps and Social Media. These consumers typically require a more lightweight data definition than typically presented on USAJOBS.

The user-friendly website

Before we look at the API, it's best to explore the site as a typical web user.

The homepage is at https://www.usajobs.gov/. The user is presented with a couple of ways to customize the search: by keyword and by location:

For this example, I'm going to search for the location of "Idaho".

Search results page

Notice where "Idaho" appears in the URL (I've omitted the www.usajobs.gov part for brevity):

/Search?Keyword=&Location=Idaho&search=Search&AutoCompleteSelected=false
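To examine a URL like this one more closely, Python's standard library can split it into its parts. This is a small sketch using `urllib.parse`; the full URL below simply re-attaches the www.usajobs.gov host that was omitted above.

```python
from urllib.parse import urlsplit, parse_qs

url = "https://www.usajobs.gov/Search?Keyword=&Location=Idaho&search=Search&AutoCompleteSelected=false"

# Separate the path from the query string (everything after the ?)
parts = urlsplit(url)
print(parts.path)  # /Search

# keep_blank_values=True keeps Keyword, which was left empty in this search
params = parse_qs(parts.query, keep_blank_values=True)
print(params["Location"])  # ['Idaho']
print(params["Keyword"])   # ['']
```

Each value comes back as a list because a query string is allowed to repeat a key.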

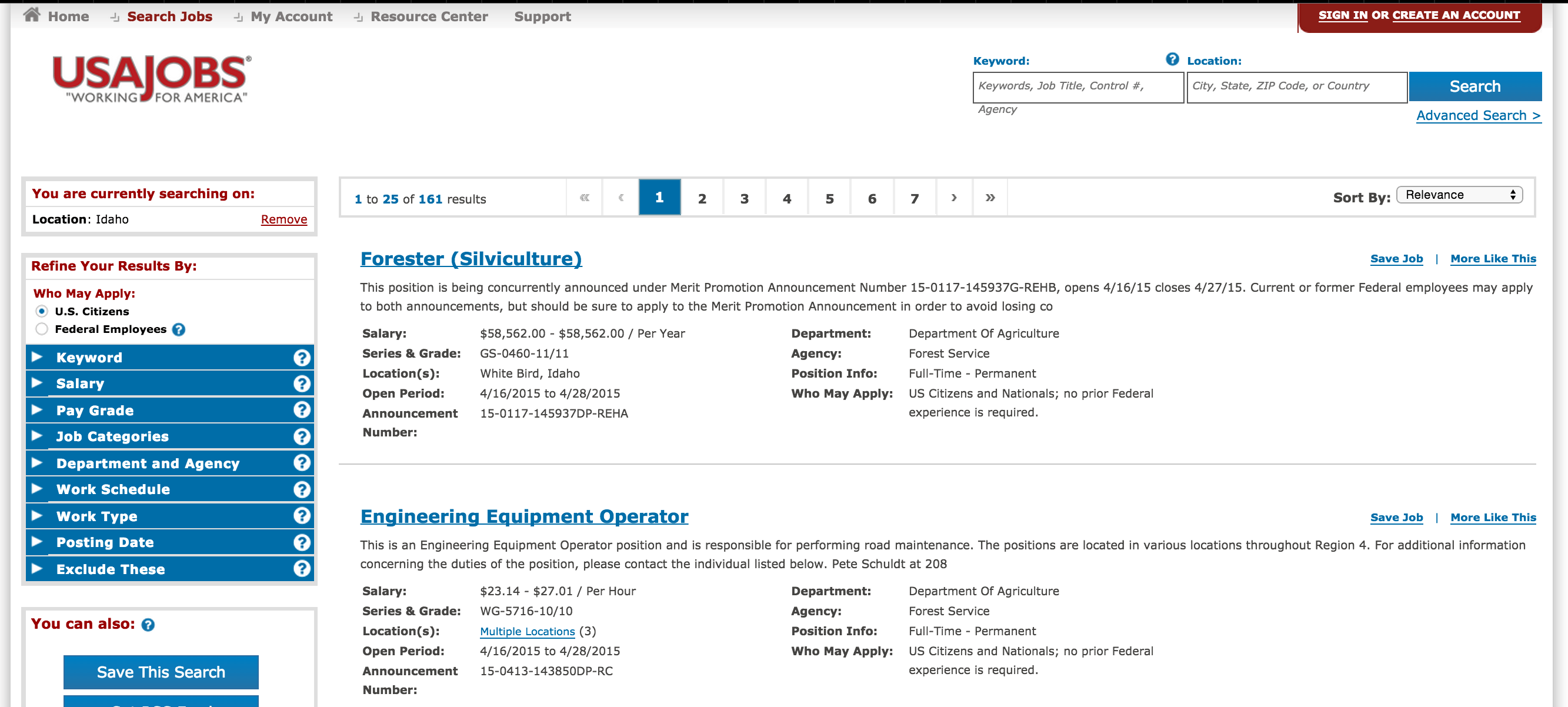

This is what the search results page looks like:

Take a look at the left sidebar and the filtering features it provides, including the ability to set exclusion filters (by keyword, by staleness of posting):

Job detail page

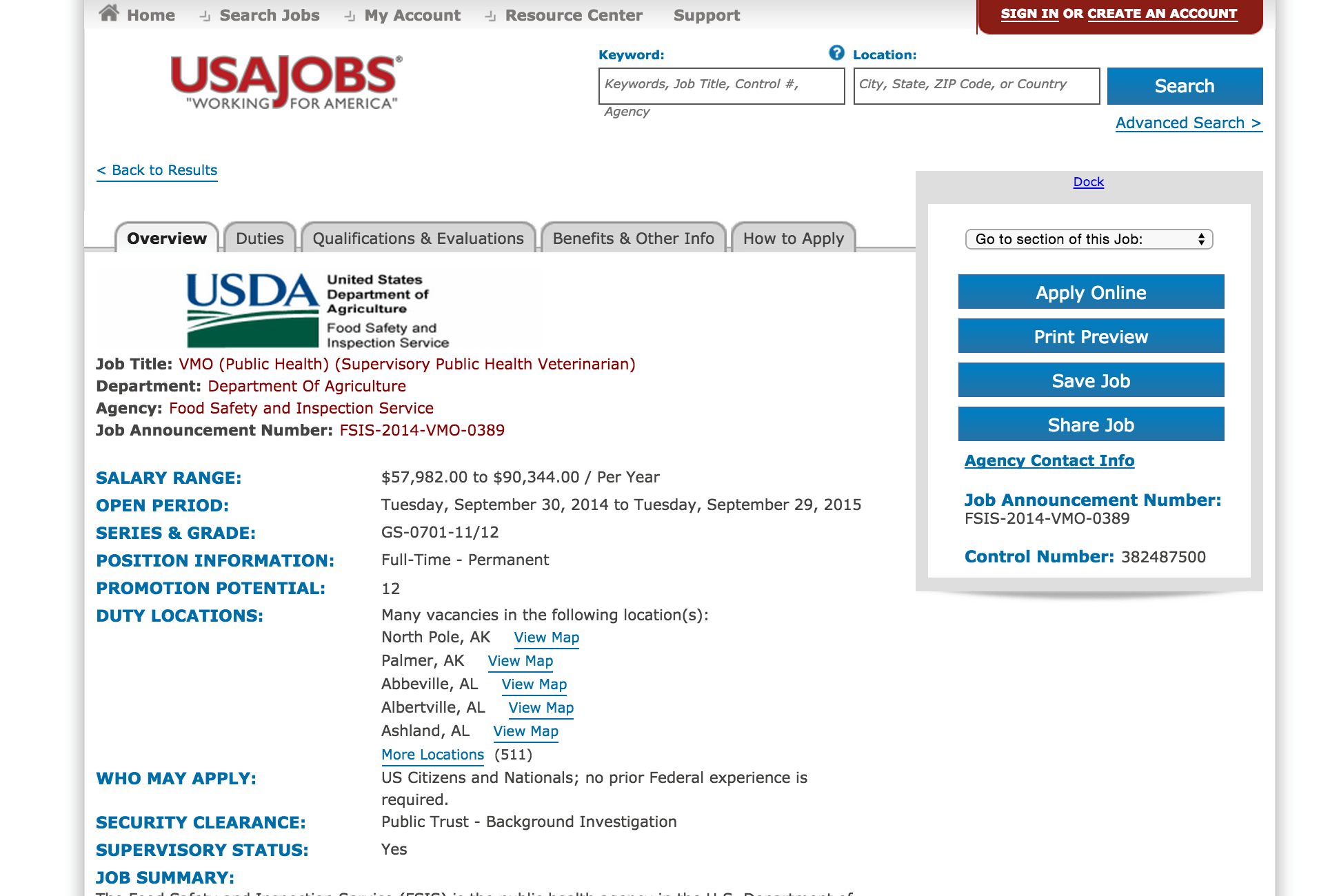

Each result has a detail page; here's the one for the job title "VMO (Public Health) (Supervisory Public Health Veterinarian)":

(Take note of the use of 382487500 as an identifier.)

This particular position happens not to be exclusive to Idaho; it is apparently hiring for as many as 511 different locations. It's not clear whether each location needs just one veterinarian or whether there are several veterinarian openings per city. One takeaway is that a single job posting can be replicated across many geographical regions. Later on, as we attempt to download every job listing, it may or may not matter that job postings don't map one-to-one onto the actual number of openings.

It's worth noting which data points appear in both the API and the website (job title, open period, pay scale, and salary range). However, the version of the API that we'll use (the REST-based API) is missing quite a few fields, notably the security clearance field and the wall of text that makes up the job description (e.g. the Duties section and the degree requirements). The job detail webpage also has many features for logged-in users, such as the ability to save job listings and apply to them online; the REST API will not allow us to (easily) emulate such features.

The developer-friendly API

Now that we've seen how the front-facing website works, let's see how its content is made accessible through an API. The homepage for the API lives at data.usajobs.gov, but we care specifically about the REST-based API: data.usajobs.gov/Rest

(Side note: The SOAP-based API has more data but is significantly more complicated to parse. Also, it's apparently marked for removal in May 2015. Another side note: it's not important right now to know about RESTful architectures; I simply use the term to distinguish between USAJobs.gov's offerings.)

The most important section of the REST-based API documentation is under the tab titled "API Query Parameters". But before digging through that, let's look at a sample URL:

https://data.usajobs.gov/api/jobs?Title=Explosive

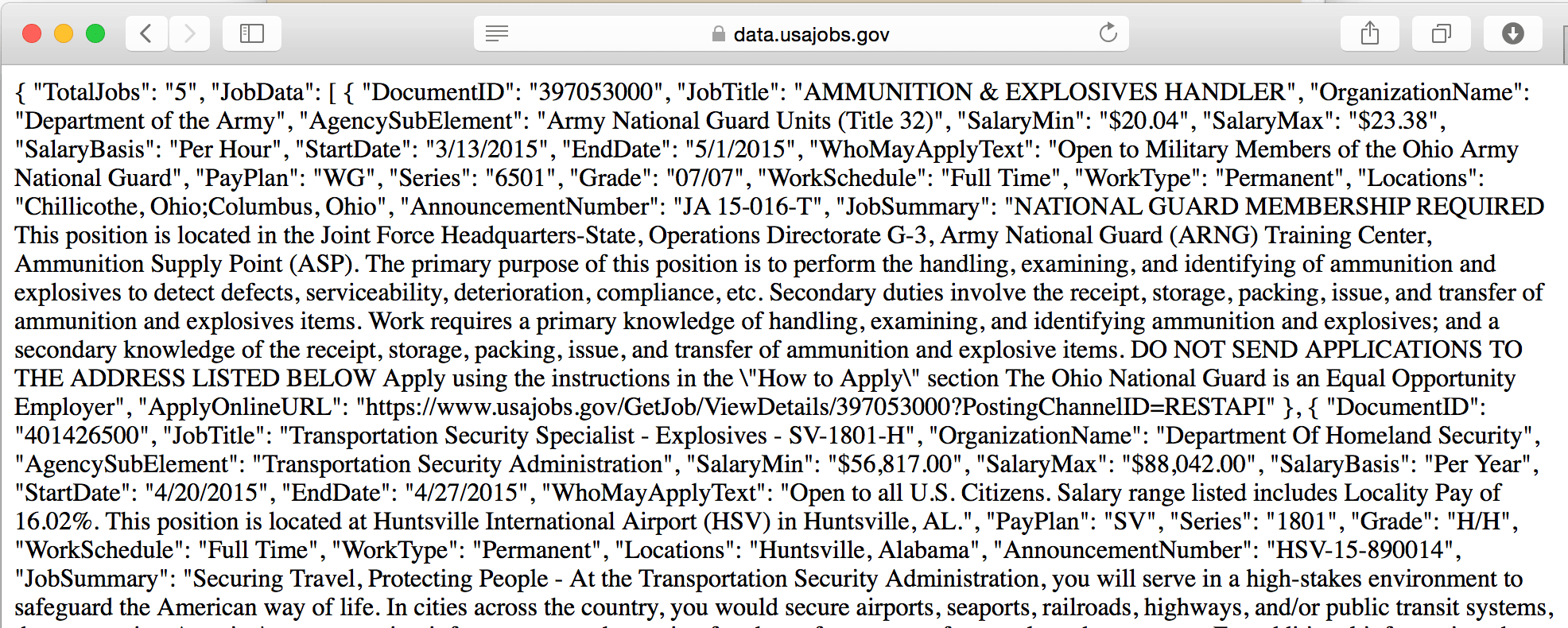

If you visit that URL in your browser, you'll either see a glob of plaintext, like this:

Or your browser might not attempt to render it at all, and instead just download the file into your Downloads folder. Or you might see a basically empty response, because at the time you checked the API, there happened to be no jobs with "Explosive" in the title.

To see what I got, I saved a cached version of the data that you can view here. I've also stashed a copy as a GitHub Gist, which may be easier to view:

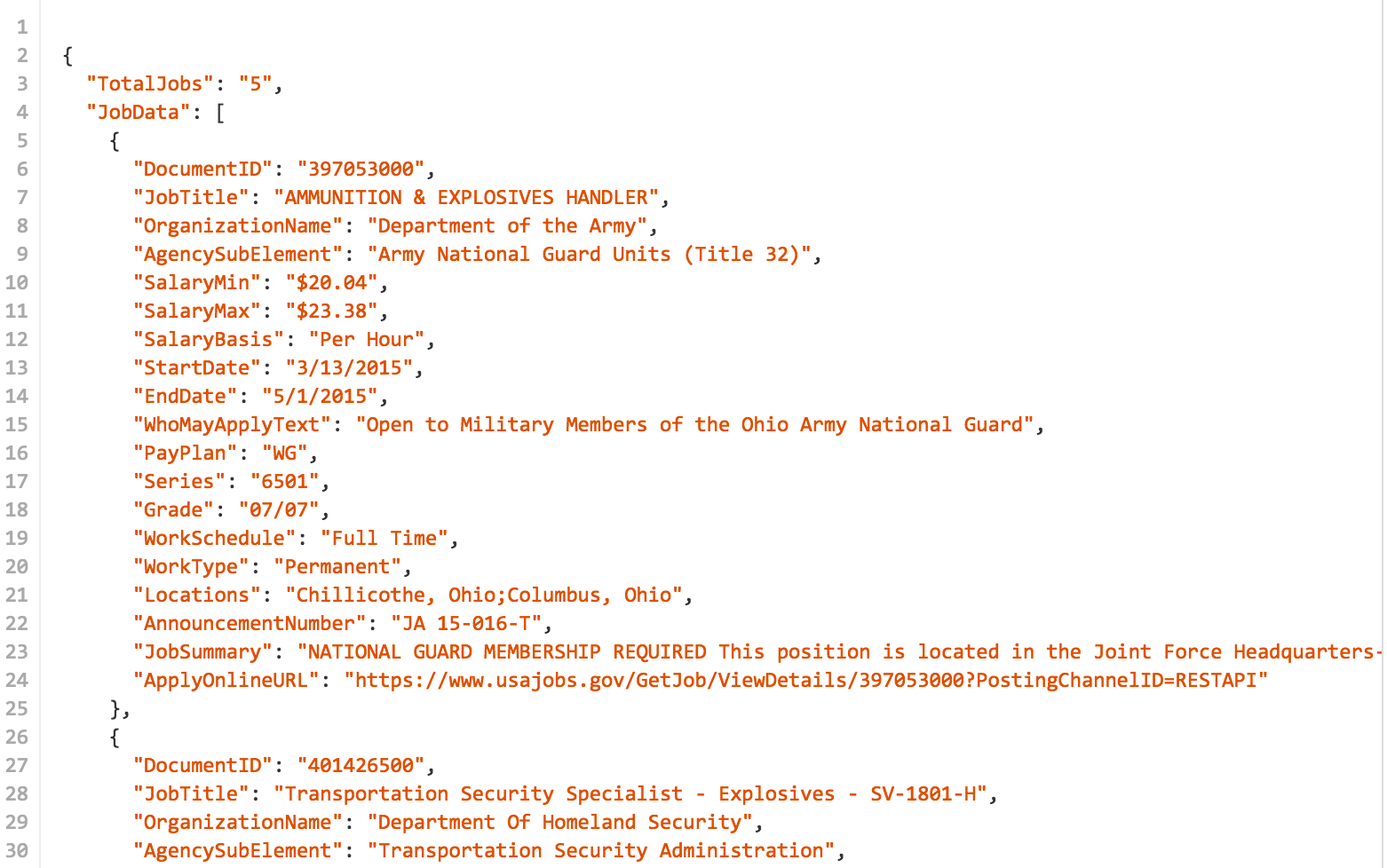

JSON for Humans

When it's properly formatted, JSON is relatively easy to comprehend; indeed, it was designed as a way to send data as text that could be efficiently read by machines and humans alike. Even knowing little about the format itself or the USAJobs API, you could correctly guess that this data contains jobs whose JobTitle property includes the word "Explosive" (e.g. "AMMUNITION & EXPLOSIVES HANDLER"), and that 5 such jobs were open when this search was made.
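If the API response shows up as one long glob of text, Python's built-in `json` module can reformat it with indentation. The compact string below is a made-up stand-in trimmed to two of the fields mentioned above, not the actual cached response.

```python
import json

# Hypothetical compact response, in the shape described above
raw = '{"TotalJobs": 5, "JobData": [{"JobTitle": "AMMUNITION & EXPLOSIVES HANDLER"}]}'

# Parse the text, then re-serialize it with two-space indentation
pretty = json.dumps(json.loads(raw), indent=2)
print(pretty)
```

The same tidying is available from the command line with `python -m json.tool`.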

JSON is even more effective from the machine's perspective: JSON-formatted text can be parsed by standard Python library functions into directly usable data structures, such as lists and dictionaries. JSON text even looks like the data structures it gets turned into.

The following is valid JSON in a text file. And if you paste it directly into your Python code (or IPython), it's recognized as a dictionary:

{"a": "Apple", "b": [1, 2, 3] }
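A quick way to confirm this is to parse the same text with the standard `json` module and compare the result to the dictionary literal:

```python
import json

text = '{"a": "Apple", "b": [1, 2, 3] }'

# json.loads turns JSON text into Python objects: objects -> dict, arrays -> list
parsed = json.loads(text)

# The same characters typed as Python code produce an equal dictionary
literal = {"a": "Apple", "b": [1, 2, 3] }

print(parsed == literal)   # True
print(type(parsed["b"]))   # <class 'list'>
```

The resemblance has limits: JSON writes `true`, `false`, and `null`, which Python spells `True`, `False`, and `None`, so not every JSON document pastes cleanly into Python code.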

The Query String

The API's base URL is:

https://data.usajobs.gov/api/jobs

Every query begins with this URL, though visiting it as is returns an empty response.

To get results, add a parameter, which is expressed as a key-value pair (sometimes referred to as a field-value pair). In the example below, title is the key and director is the value; the equals sign connects the key to its value:

title=director

Append this parameter to the base URL, and you'll get results for all open positions with director in the title. Note that the question mark is used to separate the base URL from its query string, i.e. its list of parameters:

https://data.usajobs.gov/api/jobs?title=director

Multiple parameters

If you want to find jobs in the state of Nevada, you can use either the key/field CountrySubdivision or the broader LocationName (which would presumably also include jobs in Nevada City, California). To find director positions in the state of Nevada, combine the two key-value pairs using an ampersand as a delimiter:

https://data.usajobs.gov/api/jobs?title=director&CountrySubdivision=Nevada
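Rather than gluing strings together by hand, you can build the same query string from a dictionary with `urllib.parse.urlencode`, which inserts the `=` and `&` delimiters for you. A minimal sketch:

```python
from urllib.parse import urlencode

base_url = "https://data.usajobs.gov/api/jobs"

# Each key-value pair becomes key=value; pairs are joined with &
params = {"title": "director", "CountrySubdivision": "Nevada"}

url = base_url + "?" + urlencode(params)
print(url)
# https://data.usajobs.gov/api/jobs?title=director&CountrySubdivision=Nevada
```

The `requests` library used later in this lesson accepts the same kind of dictionary through the `params` argument of `requests.get`.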

You can find the entire list of possible parameters in the REST API documentation, under the API Query Parameters tab.

The keys/fields most important to us are:

- Page: A broad search can return as many as 5,000 results, but by default the API returns only 25 results at a time. Increasing the Page parameter allows us to get through all of the listings.
- NumberOfJobs: By default, this is set to 25, but it can be increased to a maximum of 250, reducing the number of pages (i.e. URL requests) by a factor of 10.
- CountrySubdivision: Used to specify a U.S. state by name. Currently, it does not support provinces/states in other countries, e.g. Ontario, Canada. A list of possible values can be found at schemas.usajobs.gov/Enumerations/CountrySubdivision.xml
- Country: Used to specify a country by name. A list of possible countries can be found at schemas.usajobs.gov/Enumerations/CountryCode.xml. Apparently these codes are used elsewhere in the U.S. government, such as by the IRS; because they aren't ISO codes, they will cause us a huge inconvenience when we try to mix this data with systems (such as Google Charts) that use ISO codes.
- Series: A 4-digit number used by the federal government to categorize jobs, e.g. 1000 - 1099 is designated for Information and Arts, with 1015 designated for Museum Curator positions.
- OrganizationID: Can be used to specify an organization, such as HE for the Department of Health and Human Services, or more specifically, HE39 for the Centers for Disease Control and Prevention. A list of agency codes can be found here.
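The "factor of 10" follows from ceiling division: a query with 5,000 results needs 5000 / 25 = 200 requests at the default page size, but only 5000 / 250 = 20 at the maximum. A small illustrative helper (the name `pages_needed` is hypothetical):

```python
def pages_needed(total_results, per_page):
    """Number of API requests needed to page through total_results."""
    # Ceiling division without floats: any partial page still costs a request
    return -(-total_results // per_page)


print(pages_needed(5000, 25))   # 200
print(pages_needed(5000, 250))  # 20
```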

All other fields are less important for our data-gathering purposes. For example, it's harder to enumerate all the possible values for the Title, Keyword, and LocationName fields, though those would be useful if you were to build a service that passed along users' queries to the data.usajobs.gov API.

Limitations

While the API is fairly flexible, it doesn't appear to let us query for multiple values per field (e.g. return jobs in France and Germany) or use other kinds of logical combinations (e.g. get jobs with this keyword but not that keyword).
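One workaround is to run one query per value and combine the results in Python. The sketch below assumes each job is a dictionary with a `JobTitle` key (as in the responses shown in this lesson) and uses a hypothetical `id_key` argument to drop postings that appear in more than one result set; which field actually identifies a posting is an assumption you'd need to check against the API documentation. The sample data is made up.

```python
def combine_results(*result_lists, id_key):
    """Emulate OR: merge several result lists, keeping the first copy of each posting."""
    seen = set()
    combined = []
    for results in result_lists:
        for job in results:
            if job[id_key] not in seen:
                seen.add(job[id_key])
                combined.append(job)
    return combined


def exclude_keyword(jobs, word):
    """Emulate NOT: drop jobs whose JobTitle contains word (case-insensitive)."""
    return [job for job in jobs if word.lower() not in job["JobTitle"].lower()]


# Made-up sample data standing in for two separate API queries
france = [{"id": 1, "JobTitle": "Nurse"}, {"id": 2, "JobTitle": "Security Guard"}]
germany = [{"id": 2, "JobTitle": "Security Guard"}, {"id": 3, "JobTitle": "Dentist"}]

both = combine_results(france, germany, id_key="id")
print([job["JobTitle"] for job in both])
# ['Nurse', 'Security Guard', 'Dentist']
print([job["JobTitle"] for job in exclude_keyword(both, "security")])
# ['Nurse', 'Dentist']
```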

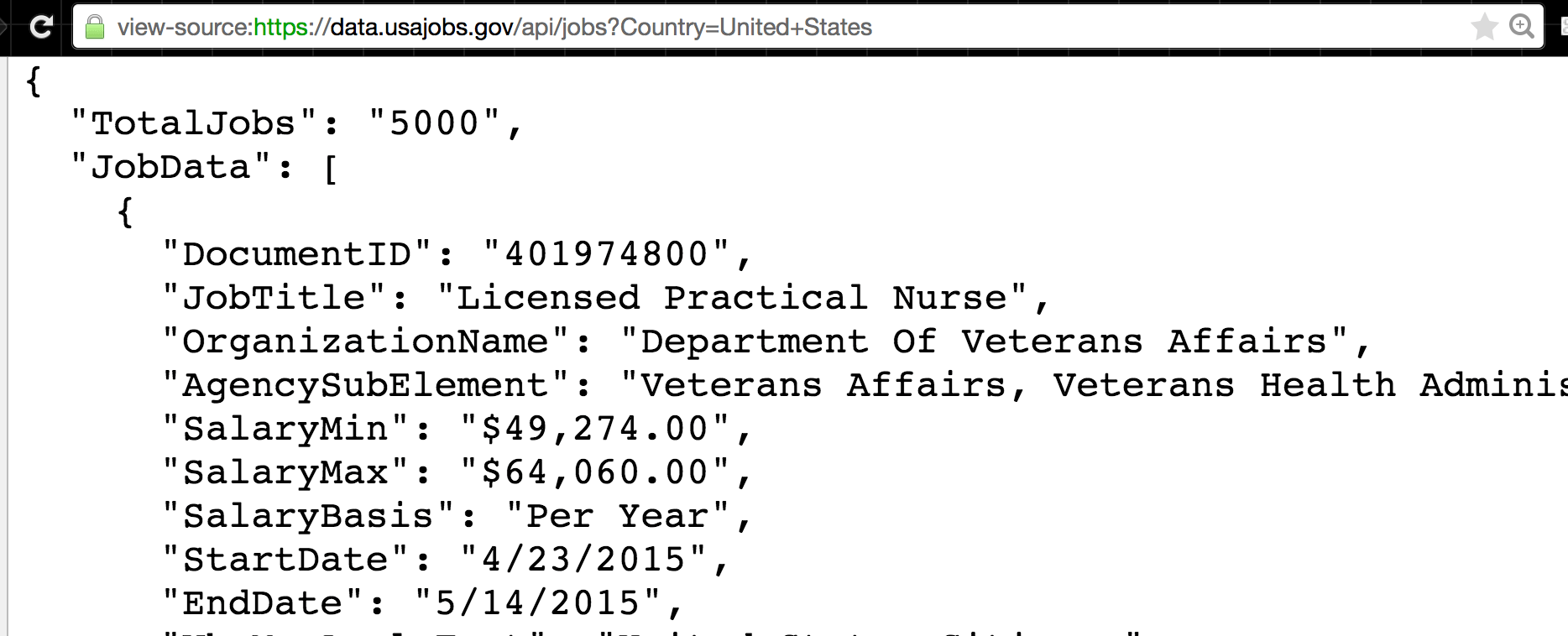

This limitation isn't a big deal, because with programming we can automate the collection of all the data. However, it's not as straightforward as just hitting up the system and iterating across 1,000 pages. Do a search for job listings using United States as the Country (quick note: URLs can't contain certain characters, such as spaces, hence the plus sign):

https://data.usajobs.gov/api/jobs?Country=United+States
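You don't have to encode these characters by hand. Python's `urllib.parse.urlencode` turns the space into a plus sign, and `quote_plus` does the same for a single value:

```python
from urllib.parse import urlencode, quote_plus

# urlencode escapes spaces in values as +
print(urlencode({"Country": "United States"}))  # Country=United+States

# quote_plus escapes one value; other reserved characters become %XX codes
print(quote_plus("Ammunition & Explosives"))    # Ammunition+%26+Explosives
```

Escaping the ampersand matters here: left raw, it would be read as the start of a new key-value pair.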

The result will look something like:

Are there exactly 5,000 job openings in the United States? Could be. But more likely, there are more than 5,000 and the API will only return a maximum of 5,000 results; altering the NumberOfJobs parameter won't change that ceiling:

https://data.usajobs.gov/api/jobs?Country=United+States&NumberOfJobs=250

Thus, to get all job listings for the U.S., we will have to provide at least one other parameter, such as CountrySubdivision, and then iterate over all of its possible values, e.g. all 50 states and D.C. (Actually, we can drop the Country parameter, since these subdivisions are by definition inside the U.S.) And of course, some of these individual states will have more than 250 listings, which will require adding the Page parameter. So if the state of California has 700 job openings, three separate API requests are necessary:

https://data.usajobs.gov/api/jobs?CountrySubdivision=California&NumberOfJobs=250

https://data.usajobs.gov/api/jobs?CountrySubdivision=California&NumberOfJobs=250&Page=2

https://data.usajobs.gov/api/jobs?CountrySubdivision=California&NumberOfJobs=250&Page=3
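Generating these URLs is easy to automate. The sketch below assumes, as in the example above, that California has 700 openings and that the maximum page size is 250; it leaves out `Page` on the first request to match the URLs listed above. The function name `page_urls` is hypothetical.

```python
from urllib.parse import urlencode

BASE_URL = "https://data.usajobs.gov/api/jobs"
MAX_PER_PAGE = 250  # the NumberOfJobs ceiling described earlier


def page_urls(state, total_jobs, per_page=MAX_PER_PAGE):
    """Build one request URL per page of results for a single state."""
    num_pages = -(-total_jobs // per_page)  # ceiling division
    urls = []
    for page in range(1, num_pages + 1):
        params = {"CountrySubdivision": state, "NumberOfJobs": per_page}
        if page > 1:
            params["Page"] = page  # first page omits Page, matching the URLs above
        urls.append(BASE_URL + "?" + urlencode(params))
    return urls


for url in page_urls("California", 700):
    print(url)
```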

Play with Python

Let's briefly do what we've just done (accessing the API via the browser), except with Python code. Don't worry if you don't understand most of it; just focus on the parts you do recognize. You can run this in IPython:

To download the first 25 job openings in California:

    import requests

    resp = requests.get("https://data.usajobs.gov/api/jobs?CountrySubdivision=California")

    # at this point, the download has completed; now convert it
    # to a data structure
    data = resp.json()

    print("Total jobs:", data['TotalJobs'])

    jobs = data['JobData']
    print("First job title:", jobs[0]['JobTitle'])

The result should look something like this (though your numbers will differ):

    Total jobs: 760
    First job title: Automotive Mechanic Supervisor
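One caveat: as noted earlier, a query can match no jobs at all, and then `jobs[0]` raises an `IndexError`. A small guard avoids that. This sketch assumes only the `JobData` and `JobTitle` keys used above; the helper name `first_job_title` is hypothetical, and the responses are made up.

```python
def first_job_title(data):
    """Return the first job's title, or None if the response lists no jobs."""
    jobs = data.get("JobData", [])  # treat a missing key like an empty list
    if not jobs:
        return None
    return jobs[0]["JobTitle"]


# Made-up responses standing in for resp.json()
print(first_job_title({"TotalJobs": 0, "JobData": []}))  # None
print(first_job_title({"TotalJobs": 1, "JobData": [{"JobTitle": "Automotive Mechanic Supervisor"}]}))
```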

And here's a preview of how writing all that code (eventually) allows us to easily increase the scope of our task:

    import requests

    baseurl = 'https://data.usajobs.gov/api/jobs?CountrySubdivision='
    states = ['California', 'Texas', 'Nevada', 'Oregon', 'Maine', 'Iowa']

    # one request per state; the loop body must be indented
    for s in states:
        resp = requests.get(baseurl + s)
        data = resp.json()
        print("For state", s)
        print(" Total jobs:", data['TotalJobs'])
        # only the first job of each state's results is shown
        jobs = data['JobData']
        print(" First job title:", jobs[0]['JobTitle'])

Result:

    For state California
     Total jobs: 760
     First job title: Automotive Mechanic Supervisor
    For state Texas
     Total jobs: 654
     First job title: INTERDISCIPLINARY ENGINEER
    For state Nevada
     Total jobs: 199
     First job title: Public Health Advisor (French)
    For state Oregon
     Total jobs: 249
     First job title: Public Health Advisor (French)
    For state Maine
     Total jobs: 58
     First job title: Public Health Advisor (French)
    For state Iowa
     Total jobs: 74
     First job title: Licensed Practical Nurse - Primary Care Clinic

Hopefully you can see how this can be expanded to all 50 states, or all 190+ countries and territories.
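As a sketch of that expansion, the function below combines the state loop with the NumberOfJobs and Page parameters to collect every listing for a list of states. It assumes the response keys used above (`TotalJobs`, `JobData`), that `TotalJobs` can be converted to an integer, and that the endpoint behaves as described in this lesson; the function names are hypothetical. The download step is passed in as `fetch`, so the paging logic can be checked offline with a made-up stand-in.

```python
def fetch_with_requests(params):
    """Download one page of results from the endpoint used in this lesson."""
    import requests  # imported here so the offline check below doesn't need it
    resp = requests.get("https://data.usajobs.gov/api/jobs", params=params)
    resp.raise_for_status()
    return resp.json()


def collect_jobs(states, fetch=fetch_with_requests, per_page=250):
    """Return {state: [job, ...]}, requesting every page for every state."""
    all_jobs = {}
    for state in states:
        jobs = []
        page = 1
        while True:
            params = {"CountrySubdivision": state, "NumberOfJobs": per_page}
            if page > 1:
                params["Page"] = page  # first page requested without Page, as above
            data = fetch(params)
            batch = data.get("JobData", [])
            jobs.extend(batch)
            # Stop on an empty page or once every reported job has been collected
            if not batch or len(jobs) >= int(data.get("TotalJobs", 0)):
                break
            page += 1
        all_jobs[state] = jobs
    return all_jobs


# Offline check: a made-up fetch function that pretends each state has 600 jobs
def fake_fetch(params):
    total, size = 600, params["NumberOfJobs"]
    start = (params.get("Page", 1) - 1) * size
    end = min(start + size, total)
    return {"TotalJobs": total,
            "JobData": [{"JobTitle": f"Job {i}"} for i in range(start, end)]}


result = collect_jobs(["California", "Nevada"], fetch=fake_fetch)
print({state: len(jobs) for state, jobs in result.items()})
# {'California': 600, 'Nevada': 600}

# Live use (assuming the endpoint still responds as described):
# jobs_by_state = collect_jobs(['California', 'Texas', 'Nevada'])
```

Keep the 5,000-result ceiling in mind: if a single state ever reports that many jobs, it would need to be split further, e.g. by Series or OrganizationID.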